Second Rodeos

Every tool you master will be replaced. The navigation that chose the tool will not.

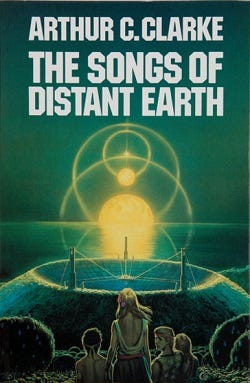

“This was the fundamental problem with rockets—and no one had ever discovered any alternative for deep-space propulsion. It was just as difficult to lose speed as to acquire it, and carrying the necessary propellant for deceleration did not merely double the difficulty of a mission; it squared it.”

― Arthur C. Clarke, The Songs of Distant Earth

In Arthur C. Clarke’s The Songs of Distant Earth, the last colony ships to leave a dying Earth arrived at their destinations almost as quickly as the first ones. The early ships launched with the best propulsion humanity could build, crawled across interstellar space for generations, and arrived after decades of sacrifice. The later ships launched years afterward with vastly superior engines and nearly caught up. The early adopters paid the highest price for the longest journey. The late starters traveled in comfort and arrived at almost the same time.

Clarke meant it as a quiet tragedy. I read it as a model for why frontier sacrifice is a necessity. He published The Songs of Distant Earth in 1986. TCP/IP had been widely adopted only three years earlier, which puts the birth of the Internet as we know it in 1983. Somehow the story is more apt now than it was then, because Clarke predicted, but did not live to see, the leaps that accessible AI has given us.

The companies building AI-native products right now are the early colony ships. They launched six months ago with the best tools available. They are in transit. And the tools they used to launch are already being surpassed. Someone who starts building next quarter with the next generation of models will arrive at nearly the same destination having spent a fraction of the effort. The scaffold I built to help AI agents understand a codebase? The next model will do that natively. The research transformation engine I demoed live on a Rosenfeld stream? Give it six months. Maybe less. I am building sandcastles at the speed of the tide.

When I started Investiture (codebase and repository scaffolding), The Box (keeping user research and insights as code), Labrador (durable memory and personality for an LLM), and Relic (a smart virtual whiteboard plugged into your codebase) three months ago, they were relevant. Before I could even finish them, people with more of a head start in their knowledge had already surpassed them.

So why launch at all? Why not wait for the faster ship? Why build things when, even as I build them, I know they are becoming obsolete, or worse? Why build sandcastles when the waves are already crashing? We’re not talking about waiting for high tide; the water is already up to our ankles and we’re still building.

Because the early colonists carried something the later ships did not. They knew what the destination looked like. They understood the terrain because they had lived on it, mapped it, argued about it for the entire journey while the fast ships were still being designed. The fast ships arrived with better engines and no knowledge of the ground.

Because I am not building code for products. I am writing code in my mind to make myself an instantly adaptable frontier builder. The sandcastles aren’t the product or the point. It is the muscle memory, the new ways of thinking, and the honing of my own mental skills that matter.

That is the only cargo that holds its value across the entire journey, no matter which engine you used: knowing where you are going and why. The passengers on those faster ships may get here more easily, more quickly, and with less risk. But they don’t learn what it took to do it the hard way.

I am not trying to get to a destination. I am trying to make things as hard as possible on myself, to push as hard and as fast as I can, not because I think it will work (it won’t), but because we are all running out of time and the only time to learn is right now.

The 9,000 Mile-Per-Hour Puck

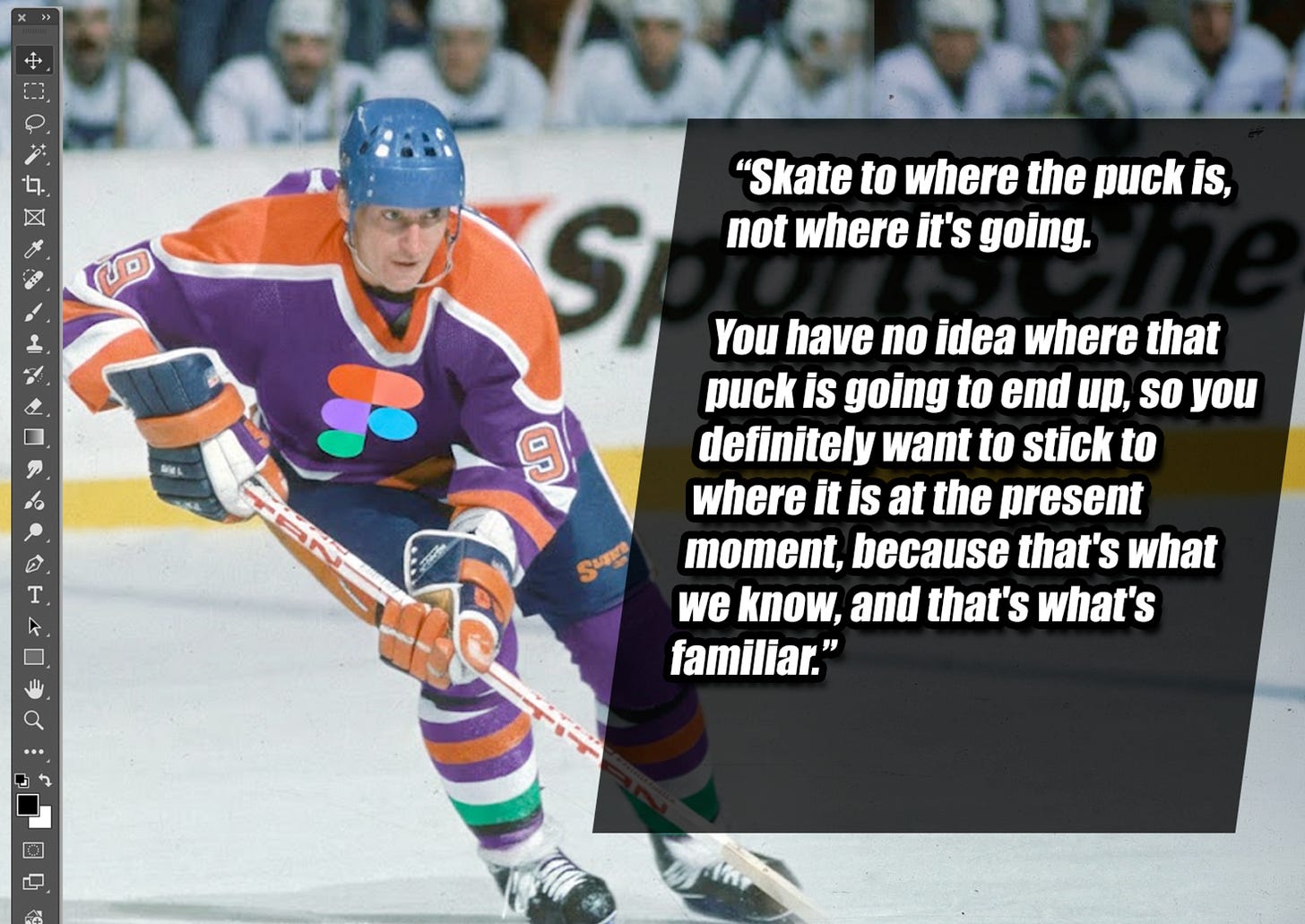

Everyone says skate to where the puck is going. Gretzky built a career on it. But Gretzky played a game where the puck moved at puck speed, where human reflexes could track the trajectory, where the boards of the rink bounded the possible.

This puck is moving at 9,000 miles per hour. By the time you see where it is headed and start skating, it has already passed through the boards, left the arena, and entered a different sport entirely.

Focusing on any specific tool, any specific model release, any specific technical capability is focusing on where the puck was. Not where it is. Not where it is going. Where it was, approximately thirty seconds ago, which in this landscape is ancient history. Even worrying about the AI bubble, environmental concerns, the ethics of the big companies and their CEOs, of state-sponsored actors, of governments: that is all where the puck is. Anthropic and OpenAI? Maybe they are around in 5 years, maybe not. Maybe America is around in 5 years, maybe not. But if I am still around, I want fungible, adaptive skills that don’t require any specific tool, model, or vendor.

I have already lived this, to an extent. Investiture, a methodology I developed for giving AI agents deep understanding of a codebase, was genuinely novel in January. By March, models were doing portions of it natively. The Box, a research transformation engine I demoed to an audience of researchers, was a breakthrough that felt like the future. Give it six months. Someone will paste research into a chat window and get comparable output without any custom tooling at all. These were not bad ideas. They were good ideas with a half-life measured in months.

They are all lily pads, meant to hop onto and steady yourself on for a moment before they start sinking and you have to jump to the next. For all I know, by Christmas I will be building my products on a homelab running black-market Chinese models that cost me more than my entire electricity bill, a cost offset by the income I am able to produce to sustain the models and feed my family.

And what do you do when your inventions have a half-life of months? The industry’s answer is to build a tutorial economy. Learn this framework. Master this model. Here are forty-seven tips for prompting your way to productivity. The content has a shelf life of six weeks (which means you need another round in six weeks, which means the tutorial economy is self-sustaining and the learner never actually arrives). It is an ouroboros of currency masquerading as education. We not only cannibalize our children, but also our partners, friends, and family.

Skating to where the puck is going assumes the puck moves at human speed. It does not. So what do you track instead?

OS/2 Warp

You have to run two operating systems simultaneously. And if your brain works anything like mine, that sentence alone is enough to make your chest tight:

System 1: The Daily Cascade.

Learn the new tool.

Try the new model.

Adopt the new pattern.

Discard yesterday’s pattern.

Pick up tomorrow’s.

This system runs on a daily and weekly cycle. Everything in it is ephemeral. You have to do it because staying current is table stakes. But it is not the value. It is maintenance. It is keeping up with the tide, not building above it. Each tool is a disposable whetstone. You aren’t using the blade of your mind to sharpen the stones; you use the stones to sharpen your mind. Wet, hone, sharpen, polish, discard. You are the edge of the blade, not the tool or model or vendor.

System 2: The Planetfall View.

Look past the cascade.

Way past it.

Past the next model release.

Past the next funding round.

Past the next wave of tools that make the last wave irrelevant.

Ask: when we land on the planet, what does the surface actually need? What problems are real and which ones are artifacts of the current tooling? What will the people there require from us that has nothing to do with which engine we used to get there? Think about 5 years ago, before the AI explosion. What skills mattered? It wasn’t Figma. Now think 5 years from now. What skills will matter? It won’t be Figma. It won’t be design as we know it.

So what to do? My brain does not toggle. It locks on. There is a clinical word for it: monotropism, the tendency for autists to pour attention into a single deep channel rather than distributing it across many. Friends, family, love, self-care, bills, health… all irrelevant. The clinical framing is tidy. The lived experience is that every time I surface from deep work to check what shipped this week, to read the changelog, to test the new model, the thread I was holding snaps. And the thread was the valuable one. The thread was always the valuable one. Honestly, some days the switching cost is not a metaphor. It is the tax I pay every single time for a brain that was not built to scan. It was built to dig.

For the first time in a decade, maybe more, I missed rent. I went into overdraft. I take bills from the mailbox and throw them in the trash without even looking. Only once the letters come on pink or yellow paper will I open them.

The world demands System 1 constantly.

Learn this.

Update that.

Retool now.

And the people selling System 1 knowledge have every incentive to keep the cycle spinning, because their product depends on the next version making the last one obsolete.

But the insight that neither system gives you on its own is the only thing I am sure of anymore. The only variable in this entire equation that is not changing at 9,000 miles per hour is the human. Human adaptation speed is constant. Human needs are constant. Human resistance to doing work they do not want to do is constant. Human desire to outsource effort to someone who knows the terrain is constant.

The technology is the variable. The human is the constant. Build for the constant.

Off By an Inch at Blastoff

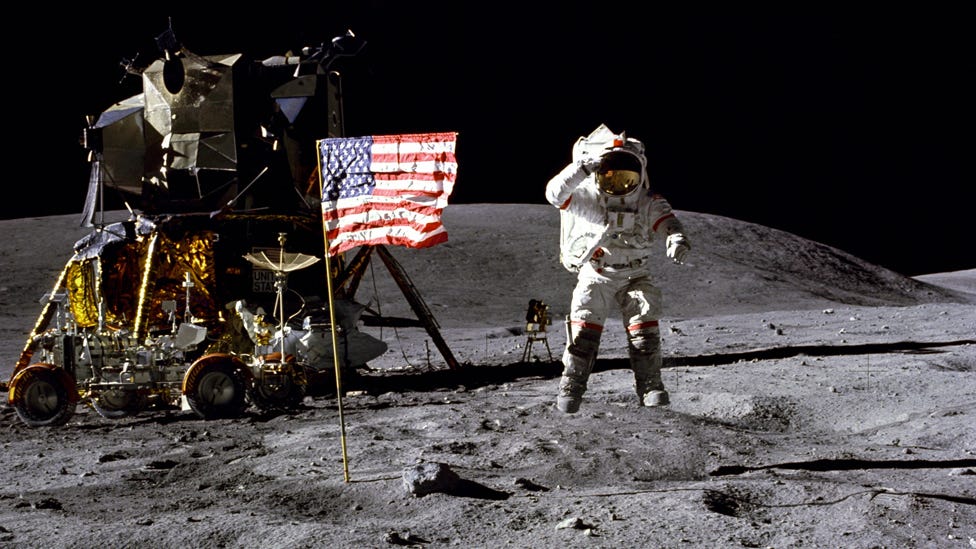

There is a navigational principle in rocketry that resolves all of this into a single image. See, when you launch a rocket, the alignment at the moment of ignition matters more than almost anything that happens afterward.

Off by an inch at planetfall, not a big deal.

Off by an inch at blastoff, you miss your target by a galaxy.

The error compounds across millions of miles of empty space. Every fraction of a degree propagates through the void until the spacecraft arrives somewhere its designers never intended. There is no correcting for it mid-flight once the original heading is wrong enough. Newton taught us this. Your enemy is inertia.

But off by an inch at planetfall? At the end of the journey, when you are already in the neighborhood of your destination? That is a course correction. A thruster adjustment. A Tuesday. Neil Armstrong set the Apollo 11 lunar module down roughly 4 miles off target after traveling some 240,000 miles.

He was about 0.0017% off at landing. Put that same error at launch instead, and the crew misses the Moon entirely and is never seen again, starving to death somewhere out in the cosmos.

The upstream decisions (who is the customer, what is the problem, why does this matter, what should exist in the world) are the blastoff-inch decisions. Galaxy-scale consequences. Get those wrong and no amount of AI-powered development speed will save you. You will just arrive at the wrong planet faster than anyone has ever arrived at the wrong planet before. That is an expensive mistake at unprecedented velocity, and after decades of traditional workflows, we are not prepared to build things in the era of AI.

The downstream decisions (which framework, which model, which deployment target, which prompt pattern) are planetfall inches. They matter, certainly. They affect performance and cost and maintainability. And they are correctable. They change every week. Getting them wrong today costs you a day. Getting them right today saves you a day. Either way, the scale is small. Nobody misses a galaxy because they chose the wrong JavaScript framework.

You have spent the last year debating which AI model to use. That is a planetfall inch. Have you spent a single hour this year pressure-testing whether the thing you are building should exist at all?

Picking Claude over ChatGPT is not strategy. Choosing React over Svelte is not strategy. Building a product nobody needs, faster, with better architecture, is not strategy. Strategy is the blastoff heading: who is this for, why do they need it, and what happens when you are wrong. When you can AI-engineer your launch from orbit (as I have written about) but are aiming for a star that went supernova centuries ago, what happens when you arrive at your destination, your customer or your business, and find out it’s gone?

Arthur C. Clarke even has a story that is sort of about this, The Star, in which a priest on a future expedition travels to the remnant of a supernova, and the twist is that it was the star that showed the three wise men where to find Jesus thousands of years earlier. Clarke was an atheist, but the point remains. By the time the three wise men saw the Star of Bethlehem in the sky in that myth, it had been dead for 2000 years. Just like your business idea.

The entire industry is obsessing over planetfall inches. Which model benchmarks better. Which agent framework is more reliable. Which deployment pipeline shaves off milliseconds. Those are all System 1 questions. They matter and they are ephemeral.

The System 2 question is: are you pointed at the right star to land on the right planet?

That question has not changed since the first human made the first product for the first customer. It will not change when AI is doing 99% of the implementation. The 1% that remains (the blastoff alignment, the who and the why and the whether) is the human constant.

Most People Do Not Want to Build

But no matter how easy building becomes, most people will not build. They will recalibrate. When building a product required a team of engineers and six months, people outsourced it. When building a product requires an AI agent and a weekend, people will still outsource it. Because they were never interested in the building. They were interested in the having built. The outcome. The business. The product in their customer’s hands. The building was always the part they wanted to skip. And that’s the neat part for people who do like building: it doesn’t matter how fast or easy it gets. Human beings cannot and will not change.

And this is not laziness; it is rational specialization. Look, the person who is an expert in leadership coaching does not want to become an expert in React, Supabase, and AI agent orchestration. She wants a coaching product that serves her clients. The person running a senior care startup does not want to learn how prompt engineering works. He wants the product in his patients’ hands. Builders and those adept at creating tools to build tools don’t get this, but there is no better mousetrap. This is core Jobs to Be Done: people hire the outcome, period. If I tell someone all they have to do is close their eyes and wish for their product to appear, they will Clockwork Orange themselves to avoid blinking.

And here is where the math gets interesting. Every time the tools get easier, more people see what is possible. More people attempt products. And more people attempting products means more people discovering they need help with the part that was always hard: knowing what to build and for whom. The judgment that exists outside of AI for the moment. And I don’t mean taste; I mean the objective gap that no tool can cover, ever, for any reason. Even the most automated of looms requires someone to want a quilt (setting aside AM and the I Have No Mouth, and I Must Scream scenario, which renders any human planning moot anyway).

Power tools did not eliminate contractors. They gave contractors better equipment and expanded the number of homeowners who understood that renovation was possible, which meant more calls to contractors, not fewer. The tools getting easier does not shrink the market for expertise, because the bottleneck was never the tools. It was the judgment gap, and I am not gatekeeping judgment. I am saying that no matter how easy it becomes, a dentist will not want to invest any judgment in a dental practice product when they could just pay you or me to make it, AI or not.

That is not taste; it is desire specification and prioritization arbitrage. Or, to put it in terms a kid from the desert Southwest would understand: second rodeos.

And if you don’t know why second rodeos are so important, you ain’t never been kicked by a horse.

The Constant

So what am I actually needed for? Not Investiture. Not The Box. Not Labrador. Not any specific tool or framework or scaffold. Those are System 1 artifacts. They are valuable now and ephemeral by nature. I built them and I learned from building them, and I will build their replacements, and their replacements’ replacements, for as long as the tools keep changing. Which is to say: forever. Disposable whetstones.

What I am needed for is the thing the AI cannot learn from data because it does not exist in data yet. The customer who has not been interviewed. The market that has not been validated. The assumption that has not been tested. The product that should not exist but that nobody has the judgment to kill. The product that should exist but that nobody has the vision to see yet.

I am needed for blastoff alignment. The upstream inch that determines whether you arrive at the right galaxy or the wrong one. Concept to customer, zero distance. That is what I have always sold, even before I had a name for it. Not the ship. The heading. And even if the AI can ensure the heading is true and do the calculations, it is indifferent.

And the beautiful irony is this: the faster AI makes everything downstream, the more valuable the upstream becomes. When implementation is free, strategy is everything. When code is a commodity, judgment is the only remaining scarcity. Josephine, the person on the other end, the one I keep writing to, does not care which model you used or which framework you chose or how elegant your agent orchestration is. She cares that you understood her problem and built the right thing. She never cared about the ship. She cared about whether it was coming to the right place. It is kind of thrilling. The part of my work that was always the most interesting is the part that survives.

I have been saying this for 20 years: my job feels no different than it did in 1998, when I made $6.50 an hour, only back then I prompted a ColdFusion developer named Andrew and not an agent named Decker. I would listen to a small-business owner in Southern Utah who wanted to get online before Y2K and come up with a website that would move the needle for them.

With 16-color GIFs.

The Only Cargo

The tools I built three months ago are already obsolete. I said that at the top of this essay, and I meant it as a confession. Now I want to revise the framing.

They were supposed to be obsolete. They were scaffolding, not the building. The building is the judgment, the desire specification, the thirty years of knowing which problems are real and which ones are artifacts of whatever tooling happens to be dominant this quarter. The scaffolding changes every season. The building stands. Disposable whetstones. It is why I have not invested much in things like OpenClaw, where you can just give it a credit card and say “build me a B2B SaaS business to make money for me, make no mistakes.”

Because even if it worked, the person never learned how to survive a rug pull, and they got one three days before I wrote this, when Anthropic stopped letting OpenClaw avoid API costs by proxying through a subscription auth token. OpenClaw as it was is already dead, and I never even finished my install.

Clarke’s early colonists paid the highest price for the longest journey. They spent decades in transit while faster ships were being designed behind them. But when they arrived, they were the ones who knew what the ground looked like. They had mapped it. They had argued about it. They had spent years thinking about what the destination would demand of them. That thinking was the cargo that mattered.

The later ships arrived with better engines and no understanding of the terrain. Faster transit. Same ignorance. Better ship, worse heading. The technology is the variable. The human is the constant. Build for the constant.

I don’t plan to ever reach for things that are within my grasp. That is boring, and it doesn’t build scar tissue. I’d rather be ripped to shreds by wolves than avoid the pain of tooth and claw, because so far, I’ve beaten the wolves every single time, and the pain of being ripped to shreds lets me understand what to do the next time. And if the wolves do get me, then it’s not something I have to worry about.

If you’ve read my essays or posts before, you know there is one quote I always come back to: If our reach doesn’t exceed our grasp, then what’s heaven for?
