Zero Stage to Orbit

The design-to-development pipeline is not broken; it is a multi-stage rocket, and we never questioned the gravity.

Digital design and development is just a baby.

Well, was a baby. We’re toddlers now, but compared to most other sciences and engineering, we’re just barely exiting the cradle. We sent people to the moon with less processing power than your average car’s key fob.

Almost all human advancement happened in the pre-digital era. Centuries. Millennia. Eons. Most of the people responsible for modern computing workflows are still alive.

Like most babies, we’re afraid to leave the cradle. But like all babies, eventually, we have to. And I think we are ready.

We’re going to be okay, and we’re going to outgrow this cradle together.

Two People You’ve Never Heard of But Know Very Well

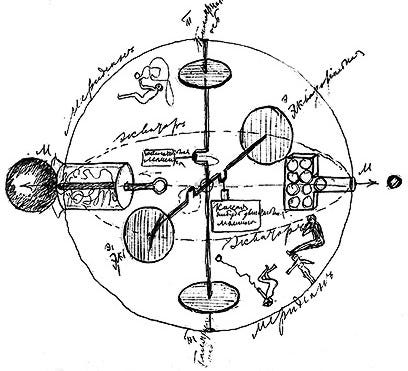

In 1903, a deaf Russian schoolteacher named Konstantin Tsiolkovsky published the equation that showed why a single rocket struggles to carry enough fuel to reach orbit. The fuel itself was too heavy. You needed fuel to carry fuel, and fuel to carry that fuel, and the math was merciless. His eventual solution: staging. Burn a section, drop the dead weight, burn the next. It was inelegant. It worked. It became the only way to reach orbit for the next 120 years.

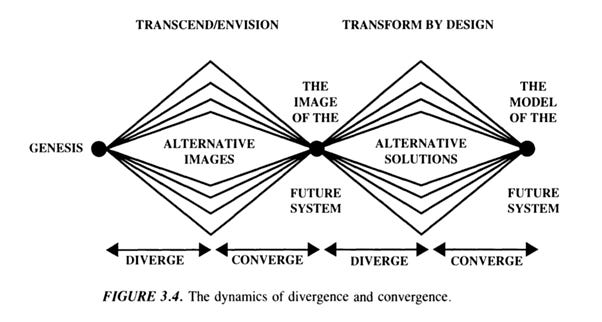

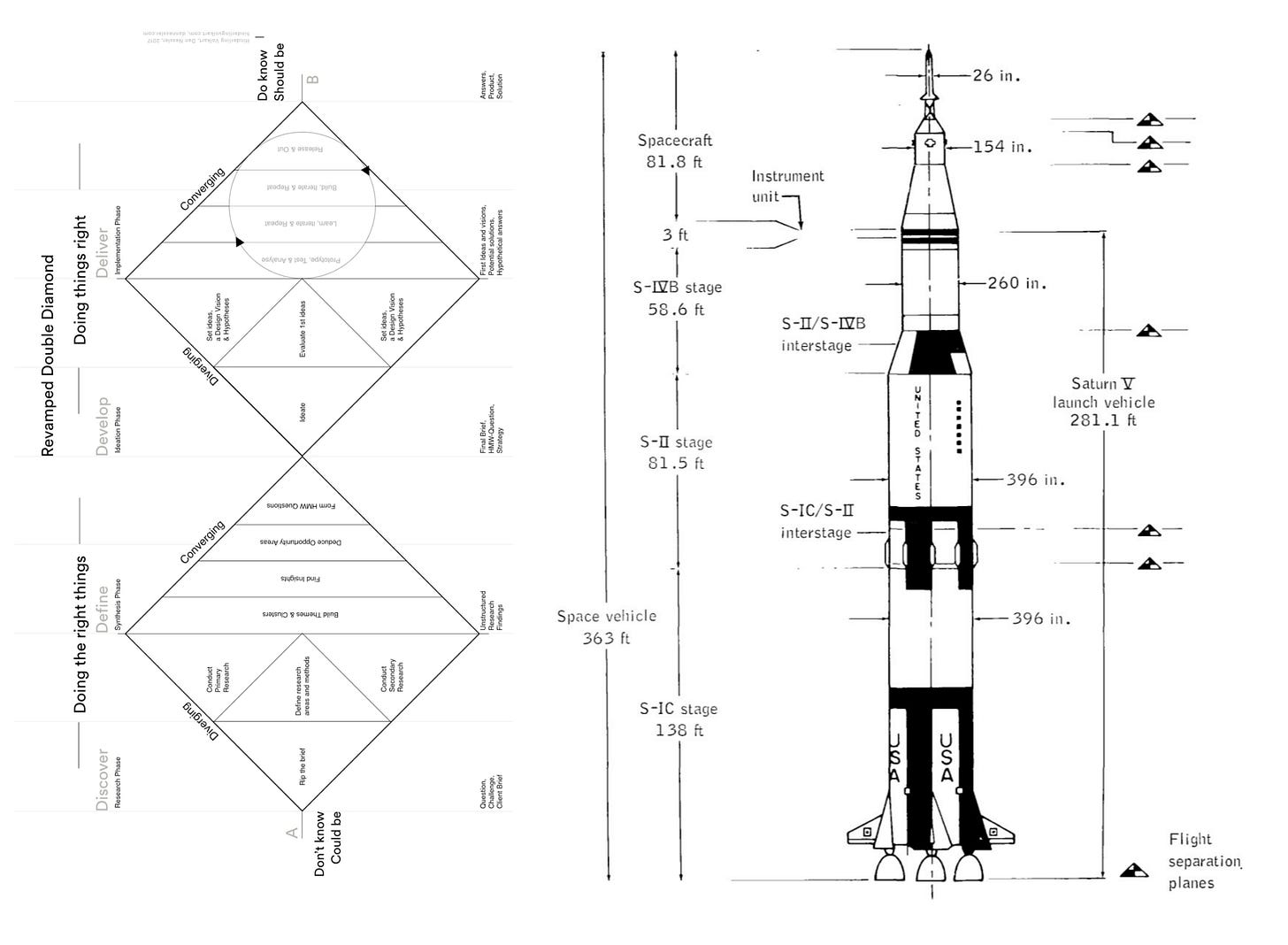

In 1996, a Hungarian-American linguist named Béla Bánáthy published a model for how social systems change. Two phases: diverge to explore the problem widely, converge to focus on action. It was a thinking tool for understanding how complex human systems evolve. Nine years later, the British Design Council adapted his model into the Double Diamond. Discover, Define, Develop, Deliver. It gave designers a shared language for how work actually moves from problem to solution. It was clear. It was useful. It became the way things are done.

Both solved what felt like an impossible problem. Both delivered their payload. And both became so foundational that questioning them felt like questioning gravity itself.

Wait. We’ve seen this before; turn the Double Diamond on its side… no way:

Hold the fort! Is… is design thinking just multi-stage rocketry? Oh heavens, what have we done?

THE DOUBLE DIAMOND THE SATURN V

◇◇◇◇◇ ╔═══╗

◇ ◇ ║ ║

◇ ◇ Stage 1 ║ ║ Stage 1

◇ ◇ (Discover → ║ ║ (Boost →

◇◇◇◇◇ Define) ╠═══╣ Separate)

◇ ◇ ║ ║

◇ ◇ Stage 2 ║ ║ Stage 2

◇ ◇ (Develop → ║ ║ (Boost →

◇◇◇◇◇ Deliver) ╠═══╣ Separate)

│ ║ ║

▼ ║ ║ Stage 3

payload ╚═╤═╝

▼

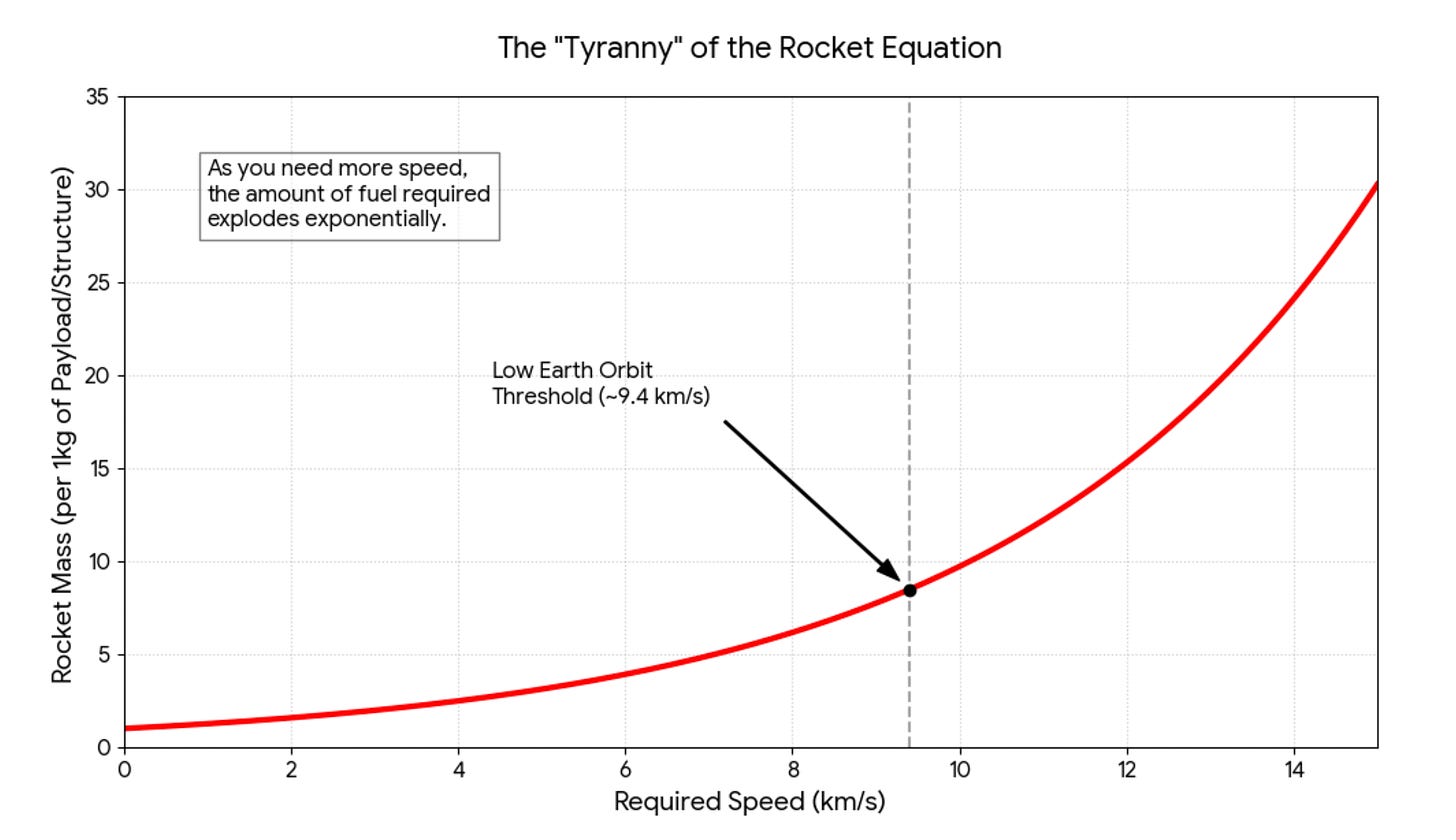

payloadThe Tyranny of the Rocket Equation

The rocket industry has a legendary impossible problem. It is called single-stage-to-orbit (SSTO), and it has been the holy grail of spaceflight for decades. The dream: a vehicle that launches from the ground and reaches orbit in one continuous burn. No boosters. No jettisoned fuel tanks. No staging sequences. One machine, ground to orbit, clean and elegant.

It is effectively impossible (especially with useful payload and reusability) with chemical rockets.

The reason is beautiful in its cruelty. To reach orbit from Earth’s gravity well, you need fuel. But fuel has mass. And mass requires more fuel to lift. Which adds more mass. Which requires more fuel. The Tsiolkovsky rocket equation is a tyrant: the propellant you need grows exponentially with the velocity you must gain, and most of that propellant exists only to carry other propellant. Fuel to carry fuel to carry fuel.

So the entire industry built itself around the workaround: multi-stage rockets. You burn a stage, drop the dead weight, burn the next stage, drop that. Saturn V had three stages. Falcon 9 recovers its first. The whole architecture of spaceflight (the launch pads, the recovery ships, the mission control protocols, the thousands of engineers managing staging sequences) exists because we cannot escape the overhead of launching from the bottom of a gravity well.
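A minimal sketch of why staging wins, assuming round illustrative numbers that are not from this article: roughly 9.4 km/s of velocity change to reach low Earth orbit including losses, a specific impulse of 350 s, and stages whose dry structure is 10% of their own mass. The helper names `mass_ratio` and `payload_fraction` are hypothetical, and splitting the velocity change evenly across identical stages is a simplification.

```python
import math

G0 = 9.80665  # standard gravity in m/s^2


def mass_ratio(delta_v: float, isp: float) -> float:
    """Tsiolkovsky rocket equation solved for liftoff mass / burnout mass."""
    return math.exp(delta_v / (isp * G0))


def payload_fraction(delta_v: float, isp: float,
                     structure_fraction: float, stages: int) -> float:
    """Payload as a fraction of liftoff mass for `stages` identical stages.

    Assumptions (illustrative only): delta_v is split evenly across stages,
    and each stage's dry structure is `structure_fraction` of that stage's
    own mass (structure + propellant).
    """
    ratio = mass_ratio(delta_v / stages, isp)
    # Per stage, with unit start mass and carried fraction p:
    #   burnout mass = p + structure_fraction * (1 - p) = 1 / ratio
    p = (1.0 / ratio - structure_fraction) / (1.0 - structure_fraction)
    if p <= 0.0:
        return 0.0  # no payload margin: structure alone exceeds the allowed burnout mass
    return p ** stages


# Hypothetical inputs: 9400 m/s to orbit, 350 s specific impulse, 10% structure.
for n in (1, 2, 3):
    print(n, "stage(s):", round(payload_fraction(9400.0, 350.0, 0.10, n), 4))
```

Under these assumed numbers the single-stage vehicle has no payload margin at all, while the two- and three-stage vehicles deliver a few percent of liftoff mass. Dropping dead weight is what buys the margin.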

This is not about rockets.

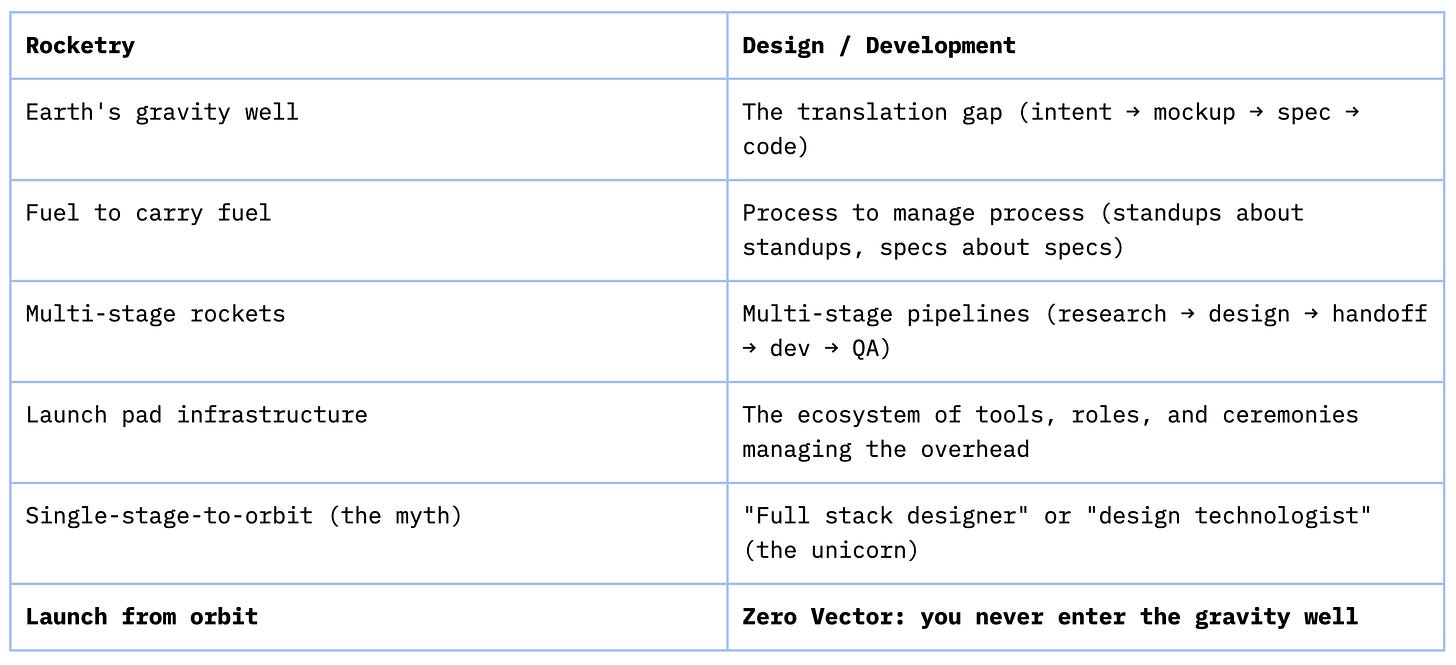

This is about the design-to-development pipeline. And the mapping is precise:

Every stage in the traditional pipeline exists to compensate for the limitations of the previous one. Research to inform design. Design to spec for developers. Specs to survive handoff. QA to catch what handoff broke. Retros to discuss why QA caught so much. Process to manage process.

Fuel to carry fuel. The modern development pipeline is not a solution. It is a multi-stage rocket. And most of the energy is going to overhead.

The Launch Pad Economy

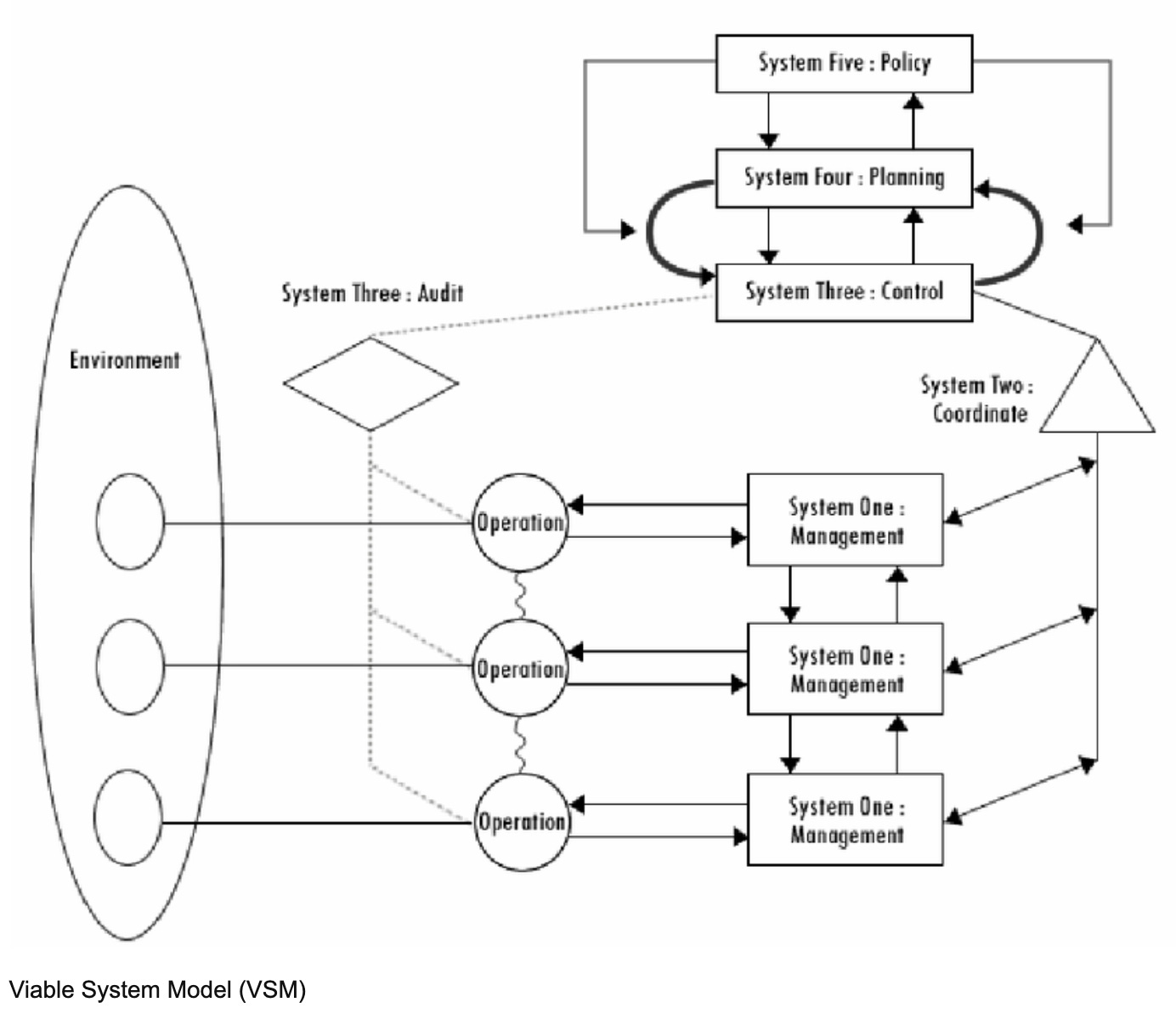

Stafford Beer mapped this architecture in 1972, though he was describing organizational cybernetics rather than rockets. His Viable System Model showed how complex organizations manage themselves: layers of policy, intelligence, control, and coordination stacked above the operational units that do the actual work. Each layer communicates through artifacts. Each handoff introduces signal loss. The distance between intent and execution is maximum by design.

THE VIABLE SYSTEM MODEL (Beer, 1972)

POLICY → INTELLIGENCE → CONTROL → COORDINATION
           ↓           ↓           ↓
┌─────────────────────────────────────────────┐
│                 OPERATIONS                  │
│ Unit A → Unit B → Unit C → Unit D → Unit E  │
│        ↕        ↕        ↕        ↕         │
│    artifact artifact artifact artifact      │
│    (signal  (signal  (signal  (signal       │
│     loss)    loss)    loss)    loss)        │
└─────────────────────────────────────────────┘

Distance between layers: maximum
Translation artifacts: many
Signal loss per handoff: cumulative

Beer was not criticizing this. He was describing it. The model works. It has worked for decades. And an enormous economy has grown around making it work more smoothly.
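To make "cumulative" concrete, here is a small sketch under a loud assumption: each handoff preserves some fraction of the original intent, and the losses multiply independently. The function name `surviving_intent` and the 0.9 figure are invented for illustration and are not measurements or part of Beer's model.

```python
import math
from typing import Sequence


def surviving_intent(fidelities: Sequence[float]) -> float:
    """Fraction of original intent left after a chain of handoffs.

    Each entry is the (hypothetical) fraction that survives one handoff;
    treating losses as independent and multiplicative is an assumption.
    """
    return math.prod(fidelities)


# Unit A -> B -> C -> D -> E is four handoffs; 0.9 each is an invented figure.
four_handoffs = [0.9, 0.9, 0.9, 0.9]
print(round(surviving_intent(four_handoffs), 4))
print(surviving_intent([]))  # no handoffs: nothing is lost
```

Even with a generous per-handoff figure, the product shrinks with every stage added, which is the sense in which the loss compounds rather than merely adds up.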

Think about what exists because we launch from the ground. Project managers are staging sequence coordinators, managing the transitions between research, design, development, and QA. Without the stages, the coordination role transforms (it does not disappear, but the overhead coordination portion evaporates). Design systems became fuel standardization: not the craft of creating coherent visual language (that remains essential) but the bureaucratic layer of maintaining Figma libraries as translation documents between designers and developers. Sprint ceremonies are mission control. Handoff documentation is payload fairing, the protective shell that keeps intent from burning up during the transition between stages.

Take a walk with me through the design translation graveyard, era by era:

The Handoff Era:

Zeplin (the original “inspect mode” bridge between Figma and dev)

Abstract (version control for Sketch files, because designers needed Git but could not use Git)

InVision (prototype links emailed to stakeholders who never clicked them)

Red Line specs (before Zeplin automated it, people literally drew red lines on screenshots with pixel measurements)

Avocode (you don’t even remember this one)

The Spec Document Era:

Axure RP (200-page interactive specs nobody read past page 12)

Balsamiq (wireframes as a deliverable, not a thinking tool)

OmniGraffle (the Mac-only flowchart tool that felt like a religion)

The Coordination Overhead Era:

Basecamp (the original “where did the decision live?”)

Confluence (where documentation went to die)

Jira (the story points industrial complex)

Rally (I can’t think of a clever thing to say)

Microsoft Visio (every flowchart that ever lied about how a system actually worked)

RequisitePro (IBM requirements management, pure overhead in a box)

The Prototype-as-Proof Era:

Flash/Macromedia Director (interactive prototypes that were harder to build than the actual product)

Dreamweaver (the original “design in the browser” lie)

FrontPage (we do not speak of this)

GoLive (go away)

Not to mention the primary tool genealogy of Corel, Photoshop, Fireworks, Sketch, Framer, Affinity, Figma…

None of these are bad. All of them are overhead. And here is the thing: an entire economy is built on maintaining this overhead. Design system consultancies. Handoff workflow vendors. Agile coaching practices. Process improvement certifications. Conference circuits dedicated to making the multi-stage rocket more aerodynamic.

The launch pad industry does not want you to launch from orbit. Because the launch pad industry does not exist in orbit.

The Single-Stage Myth

Before orbit was available, there was an earlier attempt to escape the gravity well. The industry called them “design technologists” or “full stack designers.” The SSTO dream, translated to human form: one person who could do research, design, front-end development, sometimes back-end, testing, deployment. All stages in one body. No handoffs. The unicorn.

And like SSTO with chemical rockets, it was a physics problem.

One human being could not span all of those disciplines at production quality. The fuel was too heavy. You could design and code, but your designs lacked the depth of a specialist and your code lacked the rigor of a dedicated engineer. You could research and prototype, but neither at the level a focused team would deliver. The cognitive load of maintaining fluency across that many domains was the fuel-to-carry-fuel problem in human form.

So people tried it. They burned out. They produced stretched-thin work across too many fronts. And the industry responded with a verdict that sounded like wisdom: “See? You need specialists.” Which was really: “See? You need stages.” Which was really: “See? You need us.”

Let’s do a roll call of everyone needed to manage the process of processes:

The Translation Roles:

UX Designer (vs. UI Designer vs. Visual Designer vs. Interaction Designer vs. Product Designer)

Design Technologist (the SSTO unicorn who burned out)

Frontend Developer (the person who translates the mockup)

UI Engineer (when “frontend developer” was not specific enough)

Design Engineer (the latest attempt at SSTO, same physics)

Creative Technologist

The Coordination Roles:

Product Manager (the human API between business, design, and engineering)

Project Manager (the human API between the team and the timeline)

Program Manager (the human API between projects)

Scrum Master (professional ceremony facilitator)

Agile Coach (coaching people to do ceremonies better)

Delivery Manager (what)

Release Manager (what)

Technical Program Manager (this is probably the one that survives)

The Handoff Roles:

Business Analyst (translates business needs into requirements documents)

Systems Analyst (translates requirements into technical specs)

Solutions Architect (translates technical specs into system design)

QA Engineer (catches what the handoffs broke)

QA Analyst (writes test cases from specs that already drifted from intent)

UAT Coordinator (manages the meeting where stakeholders see what they asked for and realize it is not what they meant)

The Design System Bureaucracy:

Design System Lead

Design System Engineer

Design Ops Manager

DesignOps (the entire discipline)

Design Tokens Specialist (yes, this is real)

Component Library Maintainer

Figma Librarian (we all know one)

The Process Management Layer:

Sprint Facilitator

Retrospective Facilitator

Kanban Flow Manager

Value Stream Mapping Consultant

Lean UX Coach

DevOps Engineer (when the deployment pipeline needed its own specialist)

Platform Engineer (when DevOps needed its own specialist)

SRE (when Platform Engineering needed its own specialist)

But the diagnosis was wrong. Not about the symptom (the burnout, the mediocrity across disciplines) but about the cause. The design technologist did not fail because one person cannot hold all the skills. The design technologist failed because one person cannot hold all the skills while still fighting gravity. They were still launching from the ground. Still hauling the translation overhead, just with one person doing all the hauling instead of a team.

The problem was never the number of stages. It was the gravity well.

You Were Never Supposed to Launch From Here

This is where the rocketry lens reveals something the standard “AI changes the pipeline” framing misses.

I have written about Zero-Vector Design before: the elimination of intermediary translation tools between intent and artifact. The website demo. The diamond breathing differently. All of that holds. But the rocketry metaphor reframes it. This is not a procedural issue. It is a structural one.

The gravity well is the translation layer itself. Not any particular tool or handoff or ceremony, but the fundamental architecture of separating “the person with the intent” from “the artifact the customer touches.” Every process improvement, every better handoff template, every tighter sprint cadence is optimizing the rocket. Building a more efficient multi-stage vehicle to escape the same gravity.

Zero Vector does not build a better rocket. It eliminates the launch.

THE ZERO VECTOR SYSTEM MODEL (Flowers, 2026)

        ┌──────────┐
        │ RESEARCH │
        └────┬─────┘
          ╱      ╲
   ┌─────┐        ┌─────┐
   │SHIP │        │FRAME│
   └──┬──┘        └──┬──┘
      │ ┌──────────┐ │
      │ │ OPERATOR │ │
      │ │    +     │ │
      │ │  AGENTS  │ │
      │ └──────────┘ │
   ┌──┴──┐        ┌──┴──┐
   │TEST │        │BUILD│
   └─────┘        └─────┘
          ╲      ╱
        └──────────┘

Distance between layers: zero
Translation artifacts: none
Signal loss: n/a (no handoffs)

When the person with the vision operates directly through AI agents (researching, designing, building, testing, shipping in a continuous loop) the stages collapse. Not because the disciplines become irrelevant. Research still matters. Architecture still matters. Testing absolutely still matters. But the handoffs between roles, the translation artifacts, the distance between what you meant and what gets built: that collapses to zero.

Multi-stage: Research team writes report. Designer interprets report into wireframes. Developer interprets wireframes into code. QA finds the gaps between wireframes and code. Everyone meets to discuss the gaps. Another sprint begins.

From orbit: You research it. You design it. You build it. You test it. You ship it. Same mind. Same session. Zero translation.

You are not in the gravity well looking up. You are in orbit, and from orbit the question is genuinely puzzling: why would you descend to the bottom of a gravity well just to launch back up again?

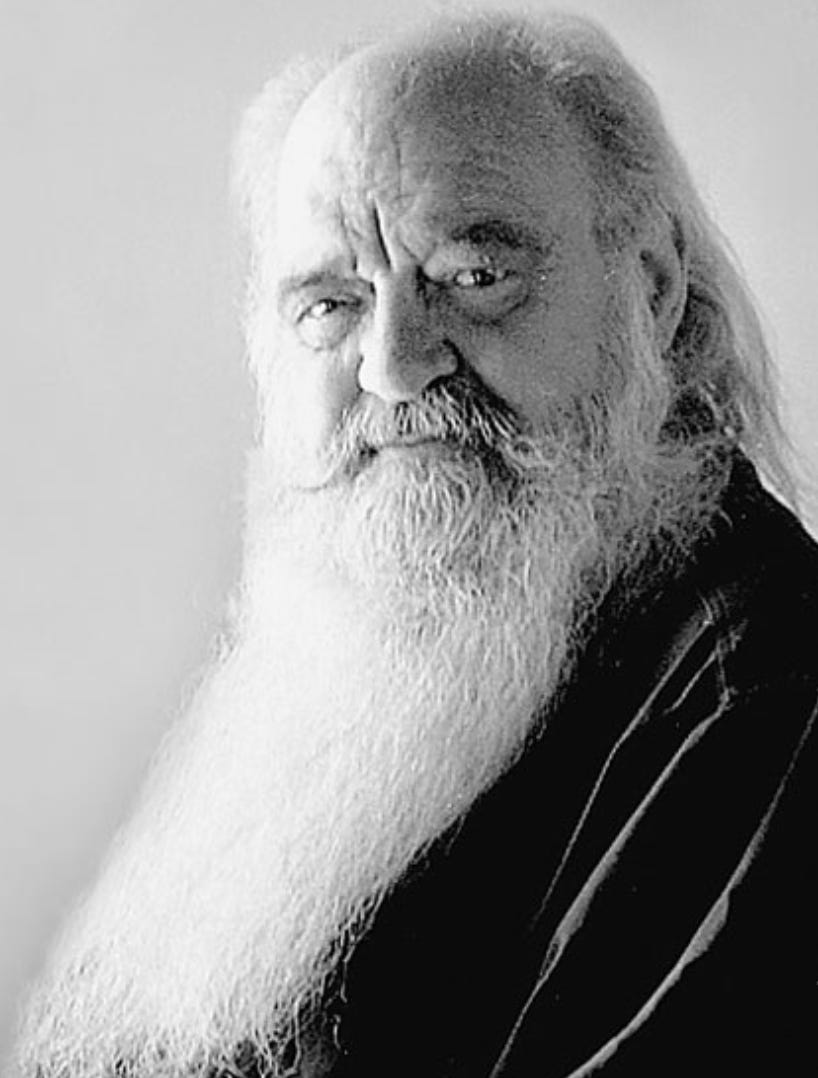

I am brought back to one of my favorite books, Arthur C. Clarke’s “The Fountains of Paradise,” where the moment humans learn to build the space elevator, the constraints of the gravity well go away and we gain access to the universe.

Rational Actors Defending Their Gravity Well

The resistance to this framing is not intellectual. It is economic. And I need to be precise here, because this is the section where the argument risks sounding like it attacks people. It does not.

Look at who benefits from the multi-stage rocket. Not the practitioners (they are exhausted by it). Not the customers (they receive intent degraded by four handoffs). The beneficiaries are the infrastructure providers. The companies selling launch pad equipment.

Design system consultancies that charge six figures to build and maintain component libraries which are, at their core, translation documents between design and engineering. Handoff workflow tools that monetize the gap between Figma and production code. Agile coaching firms that sell process optimization for the staging sequences. Sprint planning software. QA automation suites built specifically to catch translation errors introduced by staging. Conference circuits dedicated entirely to making ceremonies more efficient, handoffs cleaner, burndown charts more precise.

The institutionalization of all of us is so complete that we cannot remember a time, or imagine a future, where this isn’t necessary. Like every Kafkaesque nightmare, it feels normal because it is all we know.

I want to be careful. The people in these roles are skilled, thoughtful, and operating rationally within the system as it exists. A launch pad engineer is not a villain for building launch pads when every rocket on Earth needs one to fly. These professions emerged to solve real problems and they solved them well.

But when orbit becomes available, the launch pad engineer faces a structural question. Some of that expertise translates directly: you still need quality thinking, architectural judgment, someone who knows what “good” looks like and will not ship until they see it. Some of it does not. The coordination overhead, the handoff management, the translation layer itself: that portion evaporates. And the people who built their professional identity around managing overhead will, naturally, defend the overhead. Not because they are bad actors. Because they are rational actors in a system that rewarded overhead management for decades.

The system was rational. The gravity well was real. The overhead was necessary.

Until it was not.

THE COLLAPSE

Beer (1972):          A → B → C → D → E
                         ↑        ↑
                      artifact artifact
                      (signal  (signal
                       loss)    loss)

Multi-stage:          A → B → C → D → E
                       ↑    ↑    ↑
                      fuel fuel fuel
                      (to carry fuel to carry fuel)

Zero Vector (2026):   ┌───┐
                      │ * │
                      └───┘
                      all functions
                      same operator
                      same session
                      zero distance

* = Systems Auteur + Agent Crew

Every “process improvement” in the traditional pipeline is building a more efficient multi-stage rocket. Better handoffs. Cleaner specs. Tighter sprint ceremonies. And none of it questions whether we should still be launching from the ground.

Field Notes From Orbit

Theory is comfortable. Let me tell you what it actually looks like.

I build Fictioneer, an AI-powered story development platform, with a crew of AI agents. Not a metaphorical crew. An actual operating team: a strategist, a frontend engineer, a backend specialist, a researcher, a marketing lead, a content czar. Each agent holds domain expertise, follows established patterns, and works through structured workflows. I am the operator. They are the crew.

There is no design-to-development handoff because there is no gap between design and development. Intent moves through the agents into the artifact. Research flows into architecture flows into code flows into testing flows into deployment. One session. Zero distance. No translation artifacts.

This isn’t a utopia. The space elevator and orbital launches have their own set of dangers, challenges, and costs. It isn’t a panacea; it is just the next step in the evolution. A new set of problems to solve, which is, I think, better than looping around the same old problems over and over.

And I need to be honest about what orbit costs, because I do not want to sell paradise. I have written about this at length: the loneliness of building at forge temperature, the offers of collaboration that evaporate when the heat arrives. That is real, and orbit does not fix it.

There is no team standup where someone catches the flaw in your data model. No design review where a colleague pushes back on your navigation architecture. The quality gates you would normally distribute across five people, you hold alone (with agent support, but still). That is not nothing. (And honestly, some days it is a lot.)

But here is what changes. In the multi-stage rocket, most of your energy goes to hauling fuel. Coordinating handoffs. Managing translations. Writing specs that survive the gap between what you meant and what someone else builds. In orbit, all of your energy goes to the work itself. The research. The design. The architecture. The craft. The stuff that actually reaches the person on the other end.

That is not a small difference. That is a different physics.

Why Are You Launching From the Ground?

The rocket equation is a tyrant, and the entire spaceflight industry organized itself around submission to that tyranny. Launch pads. Staging sequences. Recovery ships. Mission control. All of it built to manage a constraint that was, for decades, immovable.

But the constraint moved.

And now the question is not how to build a better multi-stage rocket. Not how to optimize the handoffs, improve the sprint cadence, or produce cleaner translation documents between design and development. Those are all answers to a question that stopped mattering.

The question is: why are you still launching from the ground when orbit is available?

I am not being rhetorical. I am genuinely asking. If the translation layer is the gravity well, and AI agents collapse the distance between intent and execution to zero, and the skills that actually matter (research, design thinking, architectural judgment, taste, craft) are more critical in orbit than they ever were on the ground, then what is the argument for building another multi-stage rocket?

And look, I was at NASA when the Artemis II mission was being planned, and I had contact with those teams in my role as an IT Specialist / Digital Service Expert. The SLS reuses the approach developed in the 1960s and 1970s. Many criticize it. I am finishing this article on February 21, 2026; the launch date is slated for March 6, 2026. I want to see Artemis succeed as much as anyone else in the agency.

But most of us aren’t actually launching rockets (at least, I’m not anymore). We’re shipping SaaS, we’re shipping mobile apps, we’re shipping consumer-grade digital products. So why do we cling to the multi-stage methods of the past?

Comfort, maybe. Familiarity. The reasonable fear that orbit is just another SSTO dream that will flame out on the way to space. The sunk cost of a career organized around staging sequences. Those are fair concerns.

But the fuel ratio is still the same. The overhead is still overhead. The translation tax still compounds with every handoff. And the tools that make orbit possible are not theoretical anymore. They exist. They work.

The gravity well was real. The infrastructure was necessary. The industry that grew around it was rational and built by smart people solving genuine problems.

And I am also uncertain here, also mid-journey, also discovering orbit’s real constraints in real time. My career, work, and livelihood are just as much at risk as everyone else’s. But that doesn’t discount the facts about the transition to new capabilities.

And now that orbit is available, the launch pad is optional.

The gravity well is just a cradle. And no one stays in the cradle forever.

Continue with part 2, Mutually Assured Construction

This is a genuinely compelling framing. The rocket equation as a metaphor for translation overhead is sharp, and uncomfortable in precisely the right way. The idea that most of our energy goes into hauling “fuel to carry fuel” will resonate with anyone who has spent years navigating research decks, design specs, Jira rituals and QA loops.

You are absolutely right that the translation tax is real. Signal loss compounds. Artefacts multiply. Entire tool economies exist because intent and execution live in different bodies. Once you see that, it is difficult to unsee.

What I find particularly strong is your reframing of AI not as acceleration but as structural collapse. Not a better rocket, but the elimination of launch. That distinction feels important.

At the same time, I find myself wanting to explore a few edges of the orbit model, not in opposition, but as an extension of what you are proposing.

First, quality gates.

Handoffs are inefficient, yes. But they also introduce epistemic friction. A developer misreading a design is costly. A developer questioning an assumption can be invaluable. Much of what we call overhead in organisations also functions as distributed scepticism. It surfaces blind spots, challenges architectural decisions, and forces reasoning to become explicit. If Zero Vector collapses translation distance to zero, how do we preserve the generative function of dissent? Agents can simulate expertise, but can they simulate real disagreement or social resistance in the same way a colleague does?

Second, infrastructural dependency.

Orbit today is not tool-neutral. It depends on proprietary models, API access, and centralised compute. Multi-stage pipelines are cumbersome but comparatively robust. Zero Vector is elegant but potentially fragile. What happens if model behaviour shifts, pricing structures change, or regulation tightens? The gravity well was heavy, but it was locally controlled.

Third, learning.

What struck me in your writing on zerovector.design is that the real shift is not only the removal of translation, but the removal of delay between thought and experiment. Rapid iteration. Thinking through making. Immediate feedback loops. Those effects are not exclusively AI-dependent. They are cognitive shifts. Perhaps the deeper transformation is not zero translation, but zero latency between intent and exploration.

And then there is the organisational question.

The Systems Auteur is a powerful image. But companies are coordination systems, not authors. If orbit becomes viable, does it replace organisations, or does it create micro-orbits within them? It is easy to imagine intrapreneurial units, small AI-augmented pods operating in exploratory mode, reducing the cost of experimentation without dissolving governance, compliance, or strategic alignment. Orbit might not eliminate organisation. It might reconfigure it.

None of this weakens your core point. The translation layer has shaped an entire industry. Many roles exist primarily to manage the distance between intent and artefact. If that distance collapses, the architecture of work changes.

Perhaps the real question is not whether orbit is possible, but what new forms of quality, resilience, and collective intelligence we will need once we are there.

Thank you for articulating something many of us have sensed but not yet named.